EDITORIAL

Is the inclusion of randomized controlled trial data in clinical guidelines enough for the best evidence-based practice? NO!


Ismael San Mauro Martín, Elena Garicano Vilar

Research Centers in Nutrition and Health. Madrid, Spain

* Corresponding author.


This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.


The practice of evidence-based medicine seeks to combine individual expertise with the best available clinical evidence, in a conscious, explicit and judicious manner, to make decisions about patient care. However, clinicians are currently overwhelmed by an unmanageable excess of information drawn from a seemingly inexhaustible number of sources. In addition, the quality of these sources is uneven and in many cases poor. Keeping up to date therefore becomes a tedious task (1).

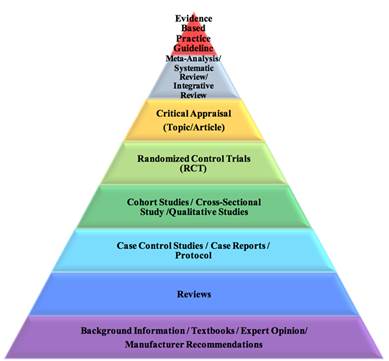

Figure 1. Evidence-based practice pyramid. Retrieved from (2).

Clinicians face daily diagnostic or therapeutic dilemmas that have no clear answer. In such situations, medical approaches vary markedly from one professional to another when facing the same clinical scenario (e.g., a patient with well-defined specific characteristics). Although all clinical decisions are made under conditions of uncertainty, the degree of uncertainty depends on the quantity and quality of the available evidence on the subject in question (1,3).

A consequence of this situation is clinical variability. Sometimes the answer to the question that arises is simply not known. At other times, there are no studies indicating the most appropriate course of action. In many cases, however, the problem is an excess of information, often contradictory, which makes it difficult to integrate coherently, to draw a clear conclusion and, therefore, to formulate a well-defined response (1).

The wide clinical variability observed, together with the difficulty of managing and integrating the abundant information that frequently exists on a given medical problem, created the need for tools capable of offering the best information in a simple, fast and transparent way. What was needed was a "tool" that, based on a sound and recent literature review, would ground practice in the best available scientific evidence, improve healthcare quality and reduce unjustified variability (1). It was in this scenario that clinical practice guidelines appeared in the 1990s, with the intention of improving the effectiveness of interventions and the quality of health care, and of reducing variation in medical practice in the management of a specific clinical process (4).

Clinical practice guidelines are defined as a set of systematically developed recommendations to help professionals and patients make decisions about the most appropriate health care, selecting the most suitable diagnostic or therapeutic options for a health problem or a specific clinical condition. On the one hand, clinical practice guidelines constitute a link between research and clinical practice and, on the other, they try to bring recommendations closer to clinical reality (4).

The concepts of level of evidence and grade of recommendation form the central axis in the development of a guideline, since they are the instruments that attempt to standardize the appraisal of published research and to give clinicians solid rules for determining its validity and summarizing its usefulness in clinical practice (5). The level of evidence indicates the extent to which we can trust that the effect estimate is correct. Beyond study design, the quality of the evidence depends on the methodology used: the more rigorous it is, the more valid and reliable the result of the research will be and, therefore, the more robust the recommendations derived from the synthesis of the studies. The strength of a recommendation, for its part, indicates the extent to which we can trust that implementing it will bring more benefits than risks (5).

There are classifications to evaluate and structure the evidence and establish the degrees of recommendation. The objective of the various classifications is to facilitate the assessment of the judgments behind the recommendations (6).

The evaluation of the evidence begins with the design of the studies. Evidence from randomized clinical trials is initially rated as high quality, and evidence from observational studies as low quality. However, 5 aspects are proposed that may lower the quality of randomized clinical trials, and 3 circumstances are indicated that can raise the quality of observational studies. The aspects that may reduce the quality of randomized clinical trials are: a) limitations in the quality of the study itself (lack of concealment of the randomization sequence, inadequate blinding, significant losses to follow-up, absence of "intention-to-treat" analysis, etc.); b) inconsistent results (very different estimates of the treatment effect, that is, heterogeneity in the results); c) absence of direct evidence (e.g., when there are no direct comparisons between 2 treatments and the available evidence comes from an indirect comparison of each drug versus placebo, or when there are large differences between the population to which the clinical practice guideline is intended to apply and the population of the studies evaluated); d) imprecision (when the available studies include few events and few patients and, therefore, the confidence intervals are wide), and e) reporting bias (when there is reasonable suspicion that the authors have not included all studies or all relevant outcome variables; this should be suspected, for example, if only a few small, industry-funded studies are available) (7).
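The rating logic described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the official procedure of any grading system: the four-level scale, the function name `rate_evidence`, the concern labels and the rule that each concern or upgrading circumstance moves the rating by exactly one level are all simplifications introduced here.

```python
from enum import IntEnum


class Quality(IntEnum):
    # Assumed four-level scale; the text itself only names "high" and "low".
    VERY_LOW = 0
    LOW = 1
    MODERATE = 2
    HIGH = 3


# Illustrative labels for the five aspects a) to e) listed in the text.
DOWNGRADE_CONCERNS = frozenset({
    "study_limitations",
    "inconsistency",
    "indirectness",
    "imprecision",
    "reporting_bias",
})


def rate_evidence(design, concerns=(), upgrades=0):
    """Return an illustrative quality rating for a body of evidence.

    design   -- "rct" or "observational"
    concerns -- labels from DOWNGRADE_CONCERNS that apply
    upgrades -- how many of the 3 upgrading circumstances apply
    """
    if design == "rct":
        start = Quality.HIGH          # trials start as high quality
    elif design == "observational":
        start = Quality.LOW           # observational studies start as low
    else:
        raise ValueError(f"unknown design: {design!r}")

    unknown = set(concerns) - DOWNGRADE_CONCERNS
    if unknown:
        raise ValueError(f"unknown concerns: {sorted(unknown)}")
    if not 0 <= upgrades <= 3:
        raise ValueError("upgrades must be between 0 and 3")

    # Simplifying assumption: every concern lowers, and every upgrading
    # circumstance raises, the rating by exactly one level.
    score = start - len(set(concerns)) + upgrades

    # Keep the result within the bounds of the scale.
    score = max(Quality.VERY_LOW, min(Quality.HIGH, score))
    return Quality(score)
```

For example, `rate_evidence("rct", ["imprecision", "inconsistency"])` drops a trial-based body of evidence two levels from its starting point, while `rate_evidence("observational", upgrades=1)` raises observational evidence one level. In practice these judgments are qualitative and are made by a guideline panel rather than computed mechanically.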

Finally, we must keep in mind that evidence of effectiveness is not always sufficient when establishing recommendations. For example, the development of treatment recommendations should include available information on individual circumstances, evidence of adverse effects, compliance and costs (8).

It is not always possible to generalize the conclusions of a clinical practice guideline to all patients. Sometimes, what is best for patients in general (which is what the clinical practice guideline recommends) may not be best for a particular patient with specific characteristics (3).

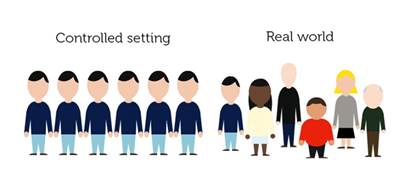

Figure 2. Real-World Evidence in Medicine Development. Retrieved from (9).

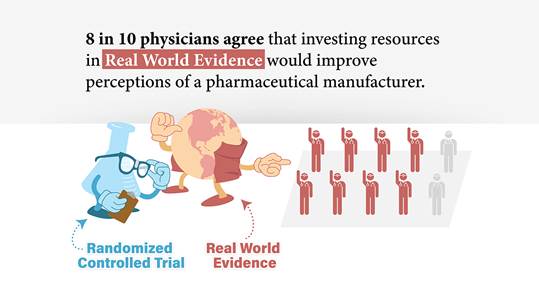

On the other hand, the latest trend on this topic is real-world evidence (RWE) studies, which are emerging as an alternative to all of the above. The importance of findings from these studies is growing and several researchers already advocate for them (10–14). Real-world studies seek to provide a complementary line of evidence and even to expand on the information provided by randomized controlled trials (RCTs) (10), as they can give vital insight into treatment effects in more diverse clinical settings, where many patients have multiple comorbidities (15). They produce evidence of therapeutic effectiveness in real-world practice settings, while RCTs only provide evidence of efficacy (10). The outcomes achieved in clinical trials and those achieved in clinical practice can sometimes differ. This problem is rooted in issues such as restrictive enrolment criteria, experimental design limitations, conflicts of interest, publication bias and biological variability (14). Therefore, applying clinical trial results to clinical practice is often not straightforward. Key to the utility of real-world studies is their ability to complement data from RCTs in order to fill current gaps in clinical knowledge (10). The core feature of RWE, compared with RCTs, is its practical nature rather than a tightly controlled setting. The wide range of data already accumulated, including electronic medical records (EMRs) as part of real-world data (RWD), can broadly reflect actual practice (11). Despite the various advantages of RWE, only a few guidelines currently review RWD, and fewer still use RWE to guide clinical practice recommendations (12). As the robustness of the information available from RWD has increased, its value has been recognized by regulatory bodies such as the US Food and Drug Administration (FDA) and the European Medicines Agency (EMA).

Even as RWD begins to find its place in science, it is still possible to be critical and to analyze this option as a whole. Are we prepared? Is it time? Is RWE actually better than the RCT? Many researchers are engaging in trial and error that may not overcome the various biases that occur in EMR-based RWE studies. While RWE can reflect the real world, there are still limitations to its acceptance (16). There are many hurdles in using RWE, and solutions must be explored. Progress toward greater data sharing is slow, but opening up and sharing healthcare data offers remarkable potential for improving care for individuals, as well as for making more effective use of limited healthcare resources (17).

Figure 3. Real World Evidence - Does it influence prescribing decisions? Retrieved from (18).

It is advisable that, as scientists, we explore new ways and methods to address this problem, and/or combine the best of each of them. Big data is expected to advance evidence-based clinical practice, making it real, grounded in real-life circumstances and not only in the sometimes biased evidence of randomized clinical trials.

References

1. Gisbert JP, Alonso-Coello P, Piqué JM. ¿Cómo localizar, elaborar, evaluar y utilizar guías de práctica clínica? Gastroenterol Hepatol. 2008;31(4):239–57.

2. Virginia Commonwealth University. Nursing Evidence Based Practice Resources [Internet]. VCU Libraries Research Guides. 2019 [cited 2019 Sep 27]. Available from: https://guides.library.vcu.edu/ebpsteps/evidencesummaries

3. Cabana MD, Rand CS, Powe NR, Wu AW, Wilson MH, Abboud PAC, et al. Why don’t physicians follow clinical practice guidelines?: A framework for improvement. J Am Med Assoc. 1999;282:1458–65.

4. Poblano-Verástegui O, Vieyra-Romero WI, Galván-García ÁF, Fernández-Elorriaga M, Rodríguez-Martínez AI, Saturno-Hernández PJ. Calidad y cumplimiento de guías de práctica clínica de enfermedades crónicas no transmisibles en el primer nivel. Salud Publica Mex. 2017;59(2):165–75.

5. Harbour R, Miller J. A new system for grading recommendations in evidence based guidelines. Br Med J. 2001;323:334–6.

6. Grading of Recommendations Assessment Development and Evaluation working group. GRADE [Internet]. [cited 2019 Sep 13]. Available from: http://www.gradeworkinggroup.org/

7. Oxman AD. Grading quality of evidence and strength of recommendations. Br Med J. 2004;328(7454):1490.

8. Aymerich M, Sánchez E. From scientific knowledge of clinical research to the bedside: clinical practice guidelines and their implementation. Gac Sanit. 2004;18:326–34.

9. GetReal. Real-World Evidence in Medicine Development [Internet]. 2017 [cited 2019 Sep 27]. Available from: https://www.imi-getreal.eu/News/ID/85/GetReal-online-course-Real-World-Evidence-in-Medicine-Development--2-Oct--12-Nov-2017

10. Blonde L, Khunti K, Harris SB, Meizinger C, Skolnik NS. Interpretation and Impact of Real-World Clinical Data for the Practicing Clinician. Adv Ther. 2018;35(11):1763–74.

11. Kim HS, Lee S, Kim JH. Real-world evidence versus randomized controlled trial: Clinical research based on electronic medical records. J Korean Med Sci. 2018;33(34):e213.

12. Katkade VB, Sanders KN, Zou KH. Real world data: An opportunity to supplement existing evidence for the use of long-established medicines in health care decision making. J Multidiscip Healthc. 2018;11:295–304.

13. Khosla S, White R, Medina J, Ouwens M, Emmas C, Koder T, et al. Real world evidence (RWE) - a disruptive innovation or the quiet evolution of medical evidence generation? [version 1; referees: 2 approved]. F1000Research. 2018;7:111.

14. Zarbin M. Real Life Outcomes vs. Clinical Trial Results. J Ophthalmic Vis Res. 2019;14(1):88–92.

15. Fortin M, Dionne J, Pinho G, Gignac J, Almirall J, Lapointe L. Randomized controlled trials: Do they have external validity for patients with multiple comorbidities? Ann Fam Med. 2006;4(2):104–8.

16. Kim HS, Kim JH. Proceed with caution when using real world data and real world evidence. J Korean Med Sci. 2019;34(4):e28.

17. Graham S, McDonald L, Wasiak R, Lees M, Ramagopalan S. Time to really share real-world data? F1000Research. 2018;7:1054.

18. Azam A. Real World Evidence - Does it influence prescribing decisions? [Internet]. MD Analytics. 2018 [cited 2019 Sep 27]. Available from: https://www.mdanalytics.com/2019/05/28/real-world-evidence-infographic/